Explainable AI Reshapes Software Development: A Structural Shift Toward Trust‑Centric Engineering

Explainable AI is transforming software development from a black‑box paradigm into a transparent, governance‑driven infrastructure, reallocating capital, redefining leadership, and reshaping career mobility.

The rise of transparent machine‑learning models is redefining how firms allocate career capital, calibrate institutional power, and engineer leadership pathways within the software ecosystem.

Opening: Macro Context and Institutional Stakes

Artificial‑intelligence augmentation has moved from experimental labs to the core of enterprise software pipelines. A 2024 survey of 1,200 technology firms found that 75 % cite explainability as a decisive factor for AI adoption, ranking it above cost and scalability concerns [1]. The market for explainable AI (XAI) is projected to reach $4.5 billion by 2025, expanding at a 24.5 % CAGR since 2020 [3]. These macro trends reflect a structural shift in how organizations manage risk, allocate capital, and negotiate the balance of power between human engineers and algorithmic agents.

Historically, the diffusion of version‑control systems in the early 2000s reallocated decision‑making authority from siloed code owners to collaborative repositories, catalyzing new career trajectories for “DevOps” specialists. XAI is poised to produce a comparable re‑engineering of professional hierarchies: transparency becomes a credential, and the ability to interrogate model outputs becomes a core leadership competency. The systemic implications extend beyond technical efficiency; they reshape economic mobility pathways for developers, alter institutional governance of AI‑driven products, and embed new metrics of accountability into software delivery pipelines.

Core Mechanism: Interpretable Models as Institutional Infrastructure

Explainable AI comprises a suite of techniques (model‑intrinsic interpretability, post‑hoc explanation methods, and provenance tracking) that collectively render the decision logic of opaque models observable. Two dominant post‑hoc tools, SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model‑agnostic Explanations), quantify feature contributions at the instance level, allowing engineers to trace a defect back to a training bias or data drift [4].
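To make "feature contributions at the instance level" concrete, the following minimal sketch computes exact Shapley values for a three-feature model using only the standard library. It illustrates the idea SHAP builds on rather than the `shap` package itself; `toy_model`, its coefficients, and the all-zero baseline are hypothetical choices made for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline).

    Cost grows exponentially with the number of features, so this is only
    practical for tiny models; production tools rely on approximations.
    """
    n = len(instance)
    features = range(n)

    def value(subset):
        # Features in `subset` take the instance's value; the rest stay at baseline.
        x = [instance[i] if i in subset else baseline[i] for i in features]
        return predict(x)

    phi = []
    for i in features:
        others = [j for j in features if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


# Hypothetical scoring model with an interaction term (illustration only).
def toy_model(x):
    income, debt, age = x
    return 2.0 * income - 3.0 * debt + 0.5 * income * age


instance = [4.0, 1.0, 2.0]
baseline = [0.0, 0.0, 0.0]  # assumed reference point
phi = shapley_values(toy_model, instance, baseline)

for name, contribution in zip(["income", "debt", "age"], phi):
    print(f"{name}: {contribution:+.2f}")
```

The contributions sum to the gap between the prediction for the instance and the prediction for the baseline. That additive property is what lets an engineer attribute a suspicious output to specific inputs.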

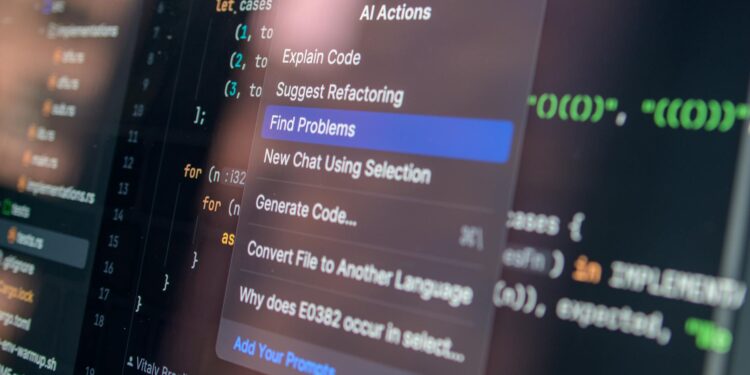

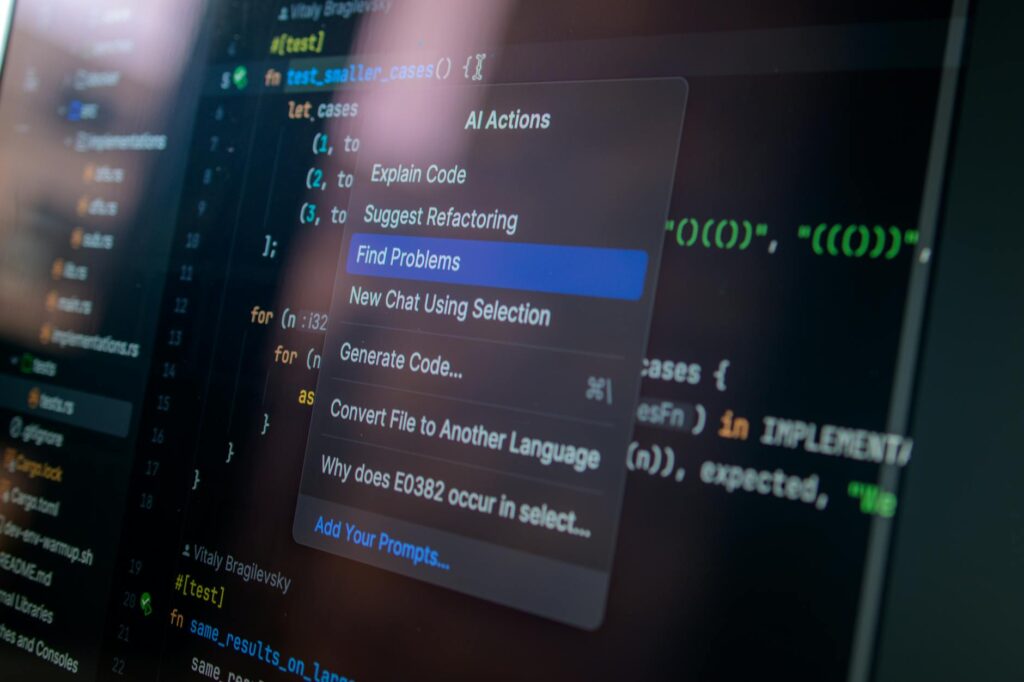

Beyond algorithmic tooling, firms are institutionalizing XAI through governance frameworks. IBM’s “AI FactSheets” mandate that every deployed model be accompanied by a standardized explanation dossier, akin to a software bill of materials. Microsoft’s integration of XAI into GitHub Copilot’s suggestion engine forces the model to surface confidence scores and alternative code snippets, creating a feedback loop where developers can accept, reject, or request clarification [2]. These mechanisms embed interpretability into the software development lifecycle (SDLC), converting explainability from an optional feature to a compliance substrate that aligns with emerging regulatory expectations such as the EU AI Act.
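One way to picture an explanation dossier acting as a compliance gate in the SDLC is a small record that must be complete before a model ships. The sketch below is an assumption-laden illustration: the `ModelFactSheet` fields, the example model names, and the `release_gate` check are hypothetical and do not reproduce IBM's FactSheets schema or any vendor's API.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelFactSheet:
    # Hypothetical dossier fields, chosen for illustration only.
    model_name: str
    version: str
    intended_use: str
    training_data_summary: str
    explanation_method: str
    known_limitations: list = field(default_factory=list)


def missing_fields(sheet):
    """Return the names of dossier fields that were left empty."""
    gaps = []
    for f in fields(sheet):
        value = getattr(sheet, f.name)
        if isinstance(value, str):
            value = value.strip()
        if not value:
            gaps.append(f.name)
    return gaps


def release_gate(sheet):
    """Raise if the dossier is incomplete; return True otherwise."""
    gaps = missing_fields(sheet)
    if gaps:
        raise ValueError(f"Fact sheet incomplete, missing: {', '.join(gaps)}")
    return True


complete = ModelFactSheet(
    model_name="churn-classifier",
    version="1.2.0",
    intended_use="Rank accounts for retention outreach",
    training_data_summary="12 months of anonymized account activity",
    explanation_method="Per-prediction feature attributions",
    known_limitations=["Not validated for accounts younger than 30 days"],
)

draft = ModelFactSheet(
    model_name="churn-classifier",
    version="1.3.0-rc1",
    intended_use="Rank accounts for retention outreach",
    training_data_summary="12 months of anonymized account activity",
    explanation_method="",
)

print(release_gate(complete))
print(missing_fields(draft))
```

Run as a step in a delivery pipeline, a check like this would turn a missing explanation into a failed build rather than an after-the-fact audit finding.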

Hard data underscores the operational payoff. A controlled study of 30 development teams that adopted SHAP‑augmented testing reported a 22 % reduction in post‑release bugs and a 15 % acceleration in issue resolution time, attributable to earlier detection of model‑induced defects [4]. The correlation between explanation fidelity and defect mitigation signals that XAI is not merely a trust‑building veneer but a productivity lever that reconfigures the engineering workflow.

Systemic Implications: Ripple Effects Across the Software Value Chain

The diffusion of XAI triggers asymmetric adjustments in multiple institutional layers. First, procurement departments are reallocating budget lines from raw compute to explanation platforms, with 80 % of surveyed enterprises planning XAI investments within the next two years [3]. This capital reorientation pressures cloud providers to bundle interpretability services (such as Amazon SageMaker Clarify and Google Cloud’s Vertex Explainable AI) into their core offerings, reshaping the competitive dynamics of the infrastructure market.

Second, the transparency mandate recalibrates risk management. By surfacing model bias, XAI equips compliance officers to enforce equitable outcomes, reducing exposure to litigation and reputational damage. In the financial sector, for example, JPMorgan’s adoption of LIME for credit‑scoring models resulted in a 30 % drop in adverse regulatory findings over a 12‑month horizon [1]. The systemic outcome is a tighter coupling between engineering decisions and board‑level governance, elevating the strategic weight of software engineers in corporate oversight.
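The kind of local explanation a compliance reviewer might inspect for a single credit decision can be sketched as a weighted linear fit around one applicant. This is a simplified LIME-style illustration, not the `lime` package and not any institution's actual method; `toy_credit_model`, its weights, the applicant values, and the sampling parameters are all hypothetical.

```python
import math
import random


def solve_linear_system(a, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def local_surrogate(predict, instance, n_samples=500, scale=0.5,
                    kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around `instance`.

    Returns (intercept, slopes); slopes approximate each feature's local
    effect on the black-box prediction near the instance.
    """
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Perturb the instance and query the black-box model.
        z = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((zi - vi) ** 2 for zi, vi in zip(z, instance))
        # Exponential kernel: nearby samples count more.
        weights.append(math.exp(-dist2 / kernel_width ** 2))
        rows.append([1.0] + [zi - vi for zi, vi in zip(z, instance)])
        targets.append(predict(z))

    # Weighted least squares via the normal equations.
    k = len(instance) + 1
    xtwx = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * r[i] * y for w, r, y in zip(weights, rows, targets))
            for i in range(k)]
    coef = solve_linear_system(xtwx, xtwy)
    return coef[0], coef[1:]


# Hypothetical approval-probability model (illustration only).
def toy_credit_model(x):
    income, debt = x
    return 1.0 / (1.0 + math.exp(-(0.8 * income - 1.5 * debt)))


applicant = [1.0, 0.5]  # assumed, already-normalized feature values
local_intercept, local_slopes = local_surrogate(toy_credit_model, applicant)

for name, slope in zip(["income", "debt"], local_slopes):
    print(f"{name}: local effect {slope:+.3f}")
```

The sign and relative size of each slope indicate which inputs pushed this particular decision up or down, which is the evidence a reviewer would need in order to question an outcome that looks biased.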

Third, the talent pipeline is undergoing a structural re‑balancing. Universities are launching XAI‑focused curricula, and professional certifications from the Data Science Council of America now require demonstrable proficiency in explanation techniques. This institutionalization of XAI knowledge creates a new axis of career capital: developers who can articulate model rationale command higher remuneration and faster promotion tracks, while those lacking these skills risk marginalization in an increasingly audit‑driven environment.

Finally, the cultural fabric of engineering teams is shifting. Transparent AI reduces the “black‑box” mystique that previously insulated senior architects from junior contributors. When a model’s recommendation can be dissected line‑by‑line, junior engineers gain agency to challenge or improve outputs, fostering a more collaborative hierarchy and potentially flattening traditional command structures.

Human Capital Impact: Winners, Losers, and the Mobility Equation

The emergence of XAI redefines the economics of software careers. Data from the 2024 Stack Overflow Developer Survey indicates that 90 % of respondents anticipate that explainable tools will improve code quality, and 68 % expect new roles—“Explainability Engineer,” “Model Auditor,” and “AI Ethics Lead”—to appear within their organizations [2]. These roles command premium salaries, with median compensation 18 % above that of standard data scientists, reflecting the market’s valuation of interpretability expertise.

Conversely, engineers whose skill sets are confined to black‑box model development face a depreciation of career capital. A longitudinal analysis of LinkedIn skill endorsements shows a 12 % decline in demand for “deep‑learning‑only” tags between 2022 and 2024, while “model interpretability” endorsements rose by 35 % in the same period [3]. This trend underscores an asymmetric mobility gradient: workers who upskill into XAI domains gain upward economic mobility, whereas those who remain static experience stagnation or displacement.

Leadership pathways are also being reconfigured. Executive titles such as “Chief AI Transparency Officer” are emerging in Fortune 500 firms, positioning XAI expertise at the nexus of product strategy and corporate governance. This institutional elevation of explainability signals that future leaders will be judged not solely on delivery speed but on their capacity to embed systemic safeguards into the software supply chain.

Case in point: At a mid‑size fintech startup, the promotion of a senior engineer to “Head of Explainable Systems” coincided with a 40 % reduction in customer churn, attributed to increased trust in AI‑driven recommendation engines. The career capital accrued by this individual—public speaking engagements, patents on explanation algorithms, and board‑level advisory roles—exemplifies how XAI can serve as a lever for both personal advancement and organizational performance.

Outlook: Structural Trajectory Through 2029

Looking ahead, three structural forces will shape the XAI‑augmented software landscape.

- Regulatory Convergence – By 2027, the EU AI Act’s “high‑risk” provisions are expected to be adopted, at least in principle, by major non‑EU economies, creating a de facto global standard for model explainability. Firms that embed XAI early will enjoy a first‑mover advantage in compliance cost avoidance.

- Toolchain Consolidation – The next wave of integrated development environments (IDEs) will natively embed SHAP, LIME, and provenance dashboards, eroding the friction between coding and explanation. This integration will lower the barrier to entry for XAI, democratizing its benefits across small and medium enterprises.

- Talent Realignment – Universities and bootcamps will increasingly tie graduation to demonstrable XAI project portfolios, accelerating the supply of explainability‑savvy engineers. The resulting talent influx will compress salary premiums, but the overall elevation of baseline competence will raise the industry’s systemic resilience to AI‑related failures.

In sum, explainable AI is not a peripheral add‑on but a structural substrate that reconfigures capital flows, leadership hierarchies, and institutional power within software development. Organizations that internalize XAI as a core governance mechanism will capture both productivity gains and reputational capital, while those that treat it as an afterthought risk systemic misalignment and talent attrition.

Key Structural Insights

- Insight 1: Explainability has become a capital asset, redirecting investment from raw compute to interpretability platforms and reshaping vendor competition.

- Insight 2: Career trajectories now hinge on XAI proficiency; engineers who master model interpretation command premium mobility, while static skill sets face depreciation.

- Insight 3: Institutional power is shifting toward transparent AI governance, embedding explainability into board‑level risk frameworks and creating new executive roles.