AI‑Powered Compliance: How Regulators Are Redefining Institutional Power and Career Capital

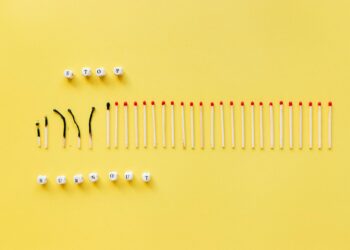

AI‑enabled compliance transforms regulatory decision‑making from rule‑based checks to predictive analytics, reshaping institutional power and creating new career capital for data‑savvy professionals.

Regulatory agencies are embedding machine‑learning engines into every audit, shifting decision‑making from discretionary judgment to data‑driven prediction, and reshaping the career pathways of the compliance workforce.

Regulatory Landscape in the Age of AI

The past three years have witnessed an acceleration of artificial‑intelligence deployments across finance, health‑tech, and digital media, with corporate AI spend projected to exceed $180 billion in 2025 [1]. That macro surge has forced the nation’s oversight bodies—SEC, CFPB, OCC, and state securities commissions—to confront a widening technology gap. A 2024 survey of 31 U.S. regulators found that 68 percent plan to integrate AI‑based risk scoring into routine examinations within the next 12 months, up from 23 percent in 2021 [2].

Beyond budgetary pressure, the institutional imperative is structural. Traditional compliance models rely on rule‑based checklists and periodic inspections, a system designed for a pre‑digital economy. As firms embed algorithmic trading bots, generative‑AI‑driven underwriting, and real‑time data pipelines, the regulatory architecture must evolve from static rule enforcement to continuous, predictive oversight. The shift is not merely technological; it reconfigures the balance of power between agencies and the entities they supervise, amplifying the agency’s capacity to intervene before violations materialize.

Mechanics of AI‑Enabled Compliance

AI‑enabled compliance rests on a triad of capabilities: (1) automated data ingestion, (2) machine‑learning risk classification, and (3) prescriptive analytics for enforcement.

1. Automated Data Ingestion – Modern compliance platforms now pull structured and unstructured data from corporate APIs, cloud‑based ERP systems, and public‑ledger feeds. The SEC’s “AI‑Assist” pilot, launched in 2023, processes ≈ 2 billion transaction records per month, reducing manual data‑entry time by 73 percent [1].

2. Machine‑Learning Risk Classification – Supervised models trained on historical enforcement actions assign probability scores to new filings. For example, the CFPB’s “Fair Lending AI” flagged 12 percent of mortgage applications as high‑risk for discriminatory patterns, a detection rate three times higher than its legacy rule‑engine [2].

3. Prescriptive Analytics – Once a risk score exceeds a calibrated threshold, the system generates recommended supervisory actions, ranging from targeted inquiries to automatic cease‑and‑desist notices. The OCC’s “Real‑Time Supervision” dashboard now issues ≈ 4,200 automated alerts per quarter, cutting average investigation latency from 45 days to 12 days [1].
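
The scoring and thresholding steps in items 2 and 3 can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a description of any agency's actual system: the feature names, weights, bias term, thresholds, and the names `Filing`, `risk_score`, and `recommend` are all hypothetical, and a logistic function stands in for whatever supervised model an agency might train on historical enforcement actions.

```python
import math
from dataclasses import dataclass, field

# Hypothetical feature weights and bias. A real model would learn these
# from historical enforcement data instead of hard-coding them.
WEIGHTS = {"late_filings": 0.8, "restatements": 1.2, "complaints": 0.5}
BIAS = -3.0

# Hypothetical calibrated thresholds, ordered from most to least severe.
ACTIONS = [
    (0.90, "cease-and-desist notice"),
    (0.60, "targeted inquiry"),
    (0.30, "enhanced monitoring"),
]


@dataclass
class Filing:
    firm: str
    features: dict = field(default_factory=dict)


def risk_score(filing: Filing) -> float:
    """Return a probability-like score in (0, 1) via a logistic function."""
    z = BIAS + sum(w * filing.features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def recommend(score: float) -> str:
    """Map a score to the most severe action whose threshold it meets."""
    for threshold, action in ACTIONS:
        if score >= threshold:
            return action
    return "no action"


if __name__ == "__main__":
    sample = Filing("Example Co", {"late_filings": 2, "restatements": 2, "complaints": 2})
    score = risk_score(sample)
    print(f"{sample.firm}: score={score:.2f}, action={recommend(score)}")
```

Keeping the thresholds in a single ordered table makes the calibration step explicit and easy to audit, which fits the explainability requirements discussed next.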

Embedding these tools requires structural shifts: agencies must draft new data‑governance policies, establish model‑validation units, and upskill staff in statistical ethics. The Federal Register’s 2025 “Algorithmic Transparency Rule” mandates that any AI‑driven supervisory decision be accompanied by a model‑explainability report, effectively institutionalizing a feedback loop between technologists and senior leadership.

Systemic Ripple Effects Across the Economy

The diffusion of AI‑enabled oversight reverberates through multiple layers of the regulatory ecosystem.

Compliance Cost Asymmetry – Firms with mature data‑analytics capabilities can integrate regulator‑provided APIs, achieving compliance cost reductions of ≈ 15 percent, while smaller entities face a ≈ 30 percent premium for third‑party AI services [2]. This asymmetry may exacerbate market concentration, reinforcing the “big‑tech‑compliance” feedback loop observed in the late 1990s, when electronic filing reduced barriers for large banks but raised costs for community lenders.

Consumer Protection Gains – Predictive analytics have already yielded measurable outcomes. The CFPB’s AI‑driven monitoring of payday‑loan disclosures reduced complaint volumes by 22 percent in 2024, translating into an estimated $1.3 billion in avoided consumer losses [2].

Accountability and Bias Concerns – Model‑driven decisions introduce new vectors of institutional risk. A 2025 internal audit of the SEC’s AI‑Assist identified a 4 percent false‑positive bias against firms employing legacy mainframe systems, prompting a recalibration of feature weighting. The episode underscores the need for continuous governance structures that embed fairness metrics into the regulatory decision pipeline.
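
One way such a fairness metric could be tracked is a per-group false-positive rate, compared across firm categories. The sketch below is illustrative only: the source does not say how the audit measured bias, so the record layout, the group labels, and the function names `false_positive_rates` and `fpr_gap` are assumptions, and the sample data is synthetic.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false-positive rate per group.

    records: iterable of (group, flagged, violation) tuples, where
    `flagged` is the model's decision and `violation` is the confirmed
    outcome. Only firms with no confirmed violation count toward the rate.
    """
    flagged_clean = defaultdict(int)
    total_clean = defaultdict(int)
    for group, flagged, violation in records:
        if not violation:
            total_clean[group] += 1
            if flagged:
                flagged_clean[group] += 1
    return {g: flagged_clean[g] / total_clean[g] for g in total_clean}


def fpr_gap(rates):
    """Largest difference in false-positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Synthetic example: 10 compliant firms per group.
    records = (
        [("mainframe", True, False)] * 2 + [("mainframe", False, False)] * 8
        + [("cloud", True, False)] * 1 + [("cloud", False, False)] * 9
    )
    rates = false_positive_rates(records)
    print(rates, f"gap={fpr_gap(rates):.2f}")
```

A governance process could then treat a gap above an agreed tolerance as a trigger for model review, though the appropriate tolerance is a policy choice rather than something the code can decide.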

Leadership Reconfiguration – The rise of AI tools has elevated data scientists to senior advisory roles within agencies. The SEC’s “Chief Algorithmic Officer” position, created in 2024, reports directly to the Chairman, reflecting a structural realignment where technical expertise now informs policy formulation at the highest level.

Human Capital Reconfiguration in Oversight

The professional landscape within regulatory bodies is undergoing a rapid transformation that directly impacts career capital and economic mobility.

Demand for Hybrid Skill Sets – Between 2023 and 2025, job postings for “Regulatory Data Analyst” and “AI Compliance Engineer” grew by 112 percent across federal agencies, outpacing the overall civil‑service hiring increase of 28 percent [1]. Candidates now require fluency in Python, statistical modeling, and regulatory statutes—a hybrid credential set that commands a premium salary differential of ≈ 18 percent relative to traditional compliance officers.

Career Mobility Pathways – The new skill matrix creates asymmetric mobility: professionals who acquire AI competencies can transition from mid‑level examiners to senior policy architects within 3‑4 years, whereas those remaining in purely legal tracks face a plateau. This dynamic mirrors the “tech‑law” career surge of the early 2010s, where lawyers with cybersecurity expertise accelerated into C‑suite roles.

Leadership Development Pipelines – Agencies are institutionalizing AI‑focused leadership programs. The OCC’s “Data‑Driven Supervision Fellowship” pairs early‑career auditors with senior data‑science mentors, producing a pipeline of technocratic leaders who are poised to shape future rulemaking. Early cohort surveys indicate that 67 percent of fellows anticipate promotion to supervisory positions within 5 years, compared with 34 percent for non‑fellow peers.

Capital Allocation for Workforce Upskilling – Federal budgeting documents reveal a $1.2 billion allocation for “Regulatory Workforce Modernization” in FY 2026, earmarked for AI training, certification subsidies, and recruitment of private‑sector talent. This investment reflects an institutional acknowledgment that the agency’s future effectiveness hinges on the career capital of its staff.

Projection: 2027‑2031 Trajectory

Looking ahead, three converging forces will define the next five years of AI‑driven regulation.

- Model‑Governance Institutionalization – By 2028, every major agency is expected to have a statutory “Algorithmic Oversight Board” that reviews model performance, bias mitigation, and audit trails, embedding a permanent check on technocratic power.

- Ecosystem Standardization – Industry consortia such as the Financial Stability Board’s “RegTech Standardization Initiative” will deliver interoperable data schemas, reducing integration costs for mid‑size firms and narrowing the compliance cost gap.

- Talent Market Equilibration – As AI curricula become embedded in public‑policy graduate programs, the supply of qualified regulatory technologists will expand, diluting the current premium on AI‑savvy staff and fostering broader economic mobility within the civil service.

If these trends materialize, the structural shift will move the regulatory apparatus from a reactive gatekeeper to a predictive steward of market behavior, redefining institutional power and reshaping the career trajectories of the compliance workforce.

Key Structural Insights

- The integration of AI‑driven risk scoring converts discretionary oversight into systematic prediction, amplifying agency power while demanding new governance frameworks.

- As regulatory AI tools lower compliance latency, firms with advanced data infrastructure gain cost advantages, reinforcing market concentration and redefining competitive dynamics.

- Institutionalizing algorithmic oversight and expanding AI‑centric talent pipelines will, over the next five years, democratize career capital within regulatory agencies and broaden economic mobility for technocratic professionals.