Google Removes AI Health Advice Feature Amid Safety Concerns

Google's 'What People Suggest' feature, which surfaced crowd-sourced medical advice, was removed amid safety concerns and public backlash over misinformation.

When Google launched “What People Suggest,” it aimed to provide personal stories alongside standard search results. This feature promised to use AI to sift through billions of experiences, offering advice that felt more human. However, just weeks later, it disappeared quietly from the search interface. Its short existence and the ensuing controversy highlight significant issues in combining AI with health information, raising concerns about responsibility and transparency in Google’s AI efforts.

The Rise and Fall of ‘What People Suggest’

From hopeful launch to quiet disappearance

Google introduced “What People Suggest” as part of a simplified search page, intending to share tips from people with similar experiences. This feature appeared in AI-driven panels above traditional results, reaching about two billion users monthly. Google claimed it could democratize health advice by turning collective wisdom into a searchable resource.

However, within a month, the feature was removed. A Google spokesperson stated this was part of a broader simplification and not due to quality concerns. This brief explanation left many wondering if the removal was a response to growing criticism.

Why the feature mattered

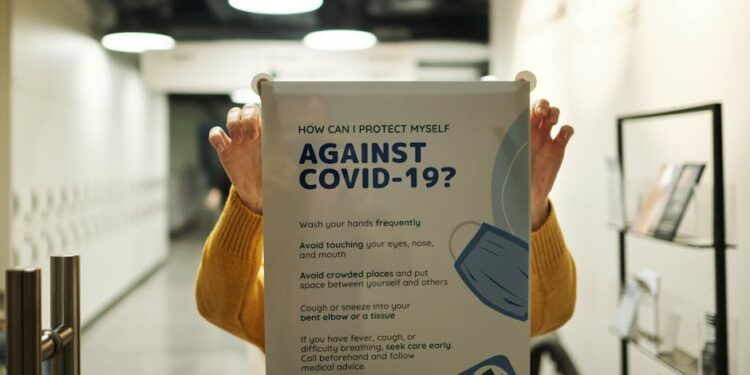

At its core, “What People Suggest” aimed to blend crowd-sourced stories with algorithmic accuracy. Traditional online medical advice has typically come from professional sources like peer-reviewed articles and official health guidelines. By mixing personal anecdotes with expert advice, Google blurred the line between expert consensus and personal experience. While users might find comfort in hearing from “someone like me,” the risk of misinformation was significant.

Safety Concerns: The Backlash Against AI Health Advice

Guardian investigation uncovers systemic risk

In January, The Guardian reported that Google’s AI Overviews were providing false health information. The investigation found instances where AI-generated summaries contradicted established medical guidance, potentially endangering users. Since these overviews appear above organic search results, they carry a significant level of trust, amplifying any errors.

The report noted that these AI Overviews reach around two billion people each month, highlighting their influence on public health. Although Google initially downplayed the findings, it later removed AI Overviews for some medical queries, acknowledging the risks involved.

Expert warnings and regulatory scrutiny

Medical professionals have warned that AI health content can confuse the public about credible information. The Guardian’s findings align with ongoing regulatory discussions in the U.S. and Europe, where lawmakers are examining the liability of tech companies providing medical advice. Following the investigation, Google faced increased scrutiny from consumer protection agencies demanding more transparency in how AI models source and rank health content.

Critics argue that the “crowd-sourced” model of “What People Suggest” heightened these concerns. Unlike curated medical databases, user-generated advice lacks systematic verification. Even with AI moderation, the volume of contributions makes thorough fact-checking impractical, allowing inaccurate tips to spread alongside reliable information.

What This Means for Google’s AI Future

Recalibrating ambition with caution

The removal of “What People Suggest” marks a strategic shift for Google. While its AI ambitions remain strong—such as the Gemini model powering experiences across Search and Workspace—the incident suggests a growing awareness that unchecked health-related AI features can damage user trust and invite regulatory scrutiny.

Moving forward, Google will likely adopt a more cautious approach, emphasizing “human-in-the-loop” oversight for health content. This may include closer ties with medical institutions, real-time verification of AI claims, and clearer labeling to differentiate anecdotal advice from evidence-based guidance. The recent statements about simplifying the search page may hide a deeper reassessment of how AI features are curated and presented.

Implications for the broader AI-health ecosystem

Google’s withdrawal does not lessen the demand for AI health tools; it emphasizes the need for strong safeguards. Start-ups and established health-tech firms will likely focus on transparency and rigorous clinical validation before scaling AI advice. Policymakers may also take this opportunity to create clearer standards for AI-generated medical content, balancing innovation with public safety.

This situation serves as a reminder that AI convenience does not replace professional medical judgment. As search engines increasingly deliver health information, both providers and consumers must maintain a healthy skepticism.

Google’s choice to remove “What People Suggest” may seem like a minor change, but it has significant implications for the tech industry. It highlights a crucial truth: as algorithms shift from answering “what is” to advising “what to do,” the stakes become much higher. The next phase of Google’s AI journey will depend not just on data breadth but also on depth of responsibility.
