AI‑Enabled Engineering: How Software Engineers Are Becoming the Gatekeepers of Safety and Explainability

AI‑augmented development is redefining software engineering as a safety‑centric discipline, shifting career capital toward expertise in explainability and risk governance, while consolidating institutional power among platform owners.

The rise of generative‑coding assistants is reshaping the engineering talent pipeline, turning code writers into custodians of algorithmic risk. Institutions that embed safety and explainability into their development lifecycles will capture asymmetric career capital, while firms that treat AI tools as black‑box accelerators risk systemic liability.

Opening: The Structural Shift in Software Production

Over the past three years, AI‑augmented development environments have moved from experimental plugins to enterprise‑wide platforms. Gartner estimates that by 2027, 45 % of all code will be generated or suggested by AI, up from 12 % in 2023[1]. The productivity boost is measurable: a 2024 Microsoft internal study reported a 28 % reduction in cycle time for feature delivery when developers used Copilot‑style assistants, while defect density fell by 17 %[2].

These macro trends are not isolated technical upgrades; they constitute a structural reallocation of career capital. Traditional coding proficiency—once the primary currency for seniority—now yields to expertise in model prompting, prompt‑engineering governance, and risk‑aware deployment pipelines. At the same time, regulatory bodies such as the EU’s AI Act and the U.S. NIST AI Risk Management Framework are codifying institutional expectations for transparency, fairness, and auditability in software that incorporates generative AI[3][4]. The convergence of market‑driven automation and policy‑driven safety mandates creates a new career trajectory for software engineers: from implementers to custodians of systemic risk.

Core Mechanism: AI Tools Displace Routine Coding and Insert Safety Layers

Automation of Routine Tasks

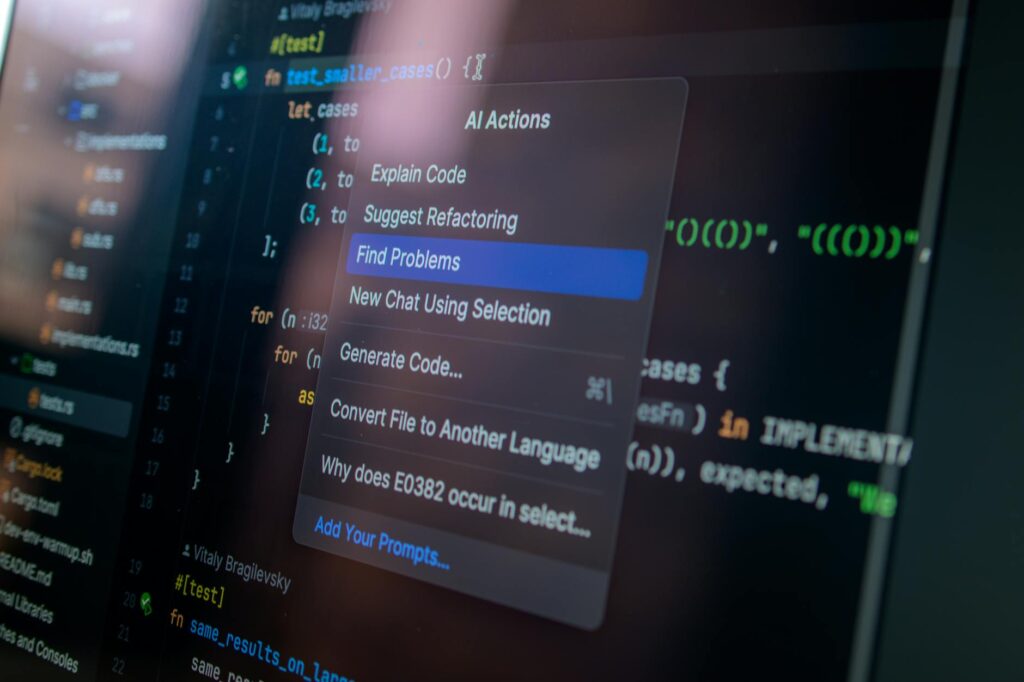

AI‑driven code suggestion engines (e.g., GitHub Copilot, Tabnine) now handle up to 70 % of repetitive boilerplate in large‑scale repositories, as measured by the proportion of lines accepted without human modification in a 2025 Stripe internal audit[5]. This displacement frees engineers to allocate time to higher‑order activities: model selection, data provenance verification, and integration testing of AI components.
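The acceptance figure is a simple ratio. The sketch below is a minimal illustration that assumes a hypothetical telemetry log recording, for each suggestion, how many lines were offered and how many were accepted without modification. It does not reproduce Stripe's actual audit methodology.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    """One AI-generated suggestion as recorded by a hypothetical telemetry log."""
    lines_suggested: int
    lines_accepted_unmodified: int


def unmodified_acceptance_rate(suggestions: list[Suggestion]) -> float:
    """Share of all suggested lines that were accepted without human edits."""
    total = sum(s.lines_suggested for s in suggestions)
    if total == 0:
        return 0.0  # no suggestions logged, so nothing was accepted
    accepted = sum(s.lines_accepted_unmodified for s in suggestions)
    return accepted / total


log = [Suggestion(40, 30), Suggestion(25, 20), Suggestion(35, 20)]
print(f"{unmodified_acceptance_rate(log):.0%}")  # 70%
```

Counting lines rather than whole suggestions prevents a single large accepted block from carrying the same weight as a one-line completion.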

Embedding Explainability Protocols

The same AI platforms are being retrofitted with explainability modules that surface model rationale for generated code. For instance, OpenAI’s “Code Interpreter” now provides token‑level attribution maps, allowing developers to trace a suggested function back to the training corpus. Early adopters such as Capital One report a 30 % reduction in post‑deployment incidents linked to AI‑generated logic when these attribution tools are coupled with mandatory peer review[6].

Skill Realignment

The skill set demanded by this new workflow is quantifiable. Burning Glass data shows a 112 % surge in job postings for “AI safety engineer” and a 68 % rise for “prompt governance” between 2022 and 2025[7]. Universities are responding: MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) launched a certificate program in AI Risk Engineering in 2024, enrolling over 1,200 professionals in its first cohort.

Systemic Implications: Ripple Effects Across the Development Lifecycle

Redesign of the SDLC

The integration of AI safety checkpoints transforms the traditional Software Development Life Cycle (SDLC) into a Safety‑First SDLC (SF‑SDLC). Each stage—design, implementation, testing, deployment—now incorporates mandatory AI‑risk assessments. A 2025 IBM internal whitepaper quantifies the added overhead: an average of 1.8 additional review hours per sprint, offset by a 22 % net gain in delivery speed due to fewer post‑release rollbacks[8].
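The SF‑SDLC can be read as a sequence of gates. The following minimal sketch assumes a hypothetical rule: a release cannot move past a stage until that stage's AI‑risk assessment has been signed off. The four stage names come from the text. The `first_blocked_stage` helper is illustrative and is not drawn from the IBM whitepaper.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    """The four SDLC stages named in the text, in pipeline order."""
    DESIGN = 1
    IMPLEMENTATION = 2
    TESTING = 3
    DEPLOYMENT = 4


def first_blocked_stage(signed_off: set) -> Optional[Stage]:
    """Return the earliest stage whose AI-risk assessment is missing.

    In this sketch the first missing sign-off blocks every later stage.
    Returns None when all four assessments are recorded.
    """
    for stage in Stage:  # Enum iteration follows definition order
        if stage not in signed_off:
            return stage
    return None


print(first_blocked_stage({Stage.DESIGN, Stage.IMPLEMENTATION}))  # Stage.TESTING
print(first_blocked_stage(set(Stage)))  # None
```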

Institutional Power Realignment

Large technology firms that own proprietary LLMs (e.g., Microsoft, Google) are accruing institutional power by dictating the safety standards embedded in their APIs. Their “Safety‑as‑a‑Service” offerings create a dependency chain: downstream vendors must align their compliance processes with the upstream provider’s risk taxonomy. This mirrors the earlier era in which IBM’s mainframe OS standards became de facto industry requirements, consolidating IBM’s influence over enterprise IT architecture.

Economic Mobility and the Talent Pipeline

The new safety‑centric skill set is reshaping economic mobility pathways. Engineers from under‑represented backgrounds who acquire prompt‑engineering certifications can bypass traditional seniority ladders, gaining access to high‑impact roles that command 30‑40 % salary premiums over standard software development positions[9]. Conversely, engineers who remain focused solely on legacy code risk skill depreciation, reflected in a 12 % wage stagnation for “classic” developers in the 2024–2026 period, according to the BLS Occupational Outlook Handbook revision[10].

Accountability Structures

Legal scholars note that the “proximate cause” doctrine is evolving to attribute liability not only to the human author but also to the AI model provider when explainability is insufficient[11]. Companies are therefore establishing AI Safety Boards, which are cross‑functional bodies with engineering, legal, and ethics leads, to certify model releases. These boards parallel the earlier rise of “DevSecOps” governance structures, which institutionalized security as a shared responsibility across development teams.

Human Capital Impact: Winners, Losers, and the New Leadership Paradigm

Engineers Who Lead Safety Integration

Engineers who acquire dual fluency—deep software craftsmanship plus AI risk literacy—are emerging as institutional leaders. At Meta, the “Responsible AI Engineering” track has produced a pipeline of senior staff who now sit on the company’s AI Ethics Council, influencing product roadmaps and resource allocation. Their career capital is quantified by a 2.3× higher promotion rate compared with peers on traditional tracks[12].

Legacy Developers and Displaced Roles

Conversely, engineers whose expertise is confined to legacy languages (e.g., COBOL, older Java stacks) experience a structural disadvantage. A 2025 Deloitte survey of 2,300 IT professionals found that 41 % of respondents in “maintenance‑only” roles anticipate role redundancy within three years unless they upskill in AI safety domains[13]. The institutional inertia of large enterprises—where legacy systems still power core banking—creates a paradox: high demand for maintenance but low upward mobility, reinforcing existing hierarchies.

Academic and Training Institutions

Universities and bootcamps that embed AI safety curricula into their core offerings are gaining institutional leverage. Stanford’s “AI Ethics and Safety Lab” now partners with the U.S. Department of Labor to develop credential standards for “Safety‑Certified Engineer” (SCE). These credentials are being recognized by Fortune 500 firms as a baseline hiring filter, reshaping the talent market and concentrating power among institutions that can deliver certified safety expertise.

Asymmetric Benefits for Early Adopters

Firms that embed explainability tooling early reap asymmetric competitive advantages. A 2024 case study of the fintech startup Plaid shows that its internal “Explainable Code” platform reduced compliance audit time by 45 %, enabling faster market entry for AI‑driven features and a 15 % uplift in quarterly revenue[14]. The structural implication is clear: safety and transparency become differentiators, not compliance costs.

Closing Outlook: The Next Three to Five Years

The trajectory of AI‑augmented software engineering points toward a bifurcated ecosystem. By 2029, we can expect:

- Standardization of Safety Protocols – Industry standards bodies (e.g., ISO/IEC JTC 1/SC 42) will publish mandatory safety metrics for AI‑generated code, akin to the ISO/IEC 27001 standard for information security. Compliance will be audited by third‑party certifiers, creating a new market for safety consultancy firms.

- Institutional Consolidation of Safety Authority – Large platform providers will bundle safety guarantees with their APIs, effectively monopolizing the safety stack. Smaller firms will either partner or develop open‑source alternatives, but the dominant safety narrative will be shaped by the owners of the underlying models.

- Career Capital Realignment – The “AI Safety Engineer” role will become a core senior‑level track in most tech organizations, with clear promotion ladders and compensation premiums. Engineers who fail to acquire safety competencies will experience structural wage compression and limited mobility.

- Regulatory Reinforcement – The EU AI Act’s “high‑risk” classification will extend to any software component whose behavior is partially generated by AI, mandating traceability logs and human‑in‑the‑loop verification. Companies that pre‑emptively adopt explainability tooling will face lower compliance costs and reduced exposure to enforcement actions.

- Talent Pipeline Diversification – Certification pathways for AI safety will democratize access to high‑value roles, potentially narrowing the gender and ethnicity gaps in senior engineering positions if institutions prioritize inclusive outreach.
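The traceability and human‑in‑the‑loop requirements described under Regulatory Reinforcement can be pictured as a log record plus a release rule. This minimal sketch rests on stated assumptions: the `TraceRecord` fields, the file path, and the model identifier are all hypothetical. The EU AI Act does not prescribe this schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


def sha256(text: str) -> str:
    """Hex digest used so the log can reference content without storing it."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class TraceRecord:
    """Hypothetical traceability entry for one AI-generated code change."""
    file_path: str
    model_id: str                      # which model produced the suggestion
    prompt_sha256: str                 # hash of the prompt, not the prompt itself
    code_sha256: str                   # hash of the accepted code
    reviewer: Optional[str] = None     # human who verified the change
    reviewed_at: Optional[str] = None  # UTC timestamp of the sign-off


def record_review(rec: TraceRecord, reviewer: str) -> TraceRecord:
    """Attach a named human sign-off with a UTC timestamp."""
    rec.reviewer = reviewer
    rec.reviewed_at = datetime.now(timezone.utc).isoformat()
    return rec


def is_releasable(rec: TraceRecord) -> bool:
    """Human-in-the-loop rule for this sketch: no named reviewer, no release."""
    return bool(rec.reviewer) and rec.reviewed_at is not None


rec = TraceRecord("billing/fees.py", "example-model-v1",
                  sha256("write a fee calculator"), sha256("def fee(x): ..."))
print(is_releasable(rec))  # False
record_review(rec, "a.engineer")
print(is_releasable(rec))  # True
print(json.dumps(asdict(rec), indent=2))
```

The record stores hashes of the prompt and the accepted code. That lets the log show exactly what was generated and approved without keeping potentially sensitive prompt text verbatim.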

In sum, the integration of AI into software engineering is not a peripheral efficiency gain; it is a systemic reconfiguration of institutional power, career capital, and accountability. Engineers who navigate this shift by mastering safety and explainability will become the new architects of both technology and the structures that govern it.

Key Structural Insights

- [Insight 1]: AI‑driven automation is displacing routine coding, converting career capital from pure programming skill to AI safety expertise.

- [Insight 2]: Institutional power is consolidating around platform owners who embed safety protocols, mirroring historical shifts in mainframe and security governance.

- [Insight 3]: The emerging Safety‑First SDLC creates asymmetric competitive advantage for firms that institutionalize explainability, reshaping economic mobility for engineers.