The Download: an exclusive Jeff VanderMeer story and AI models too scary to release

In recent discussions surrounding artificial intelligence, a striking tension has emerged. The allure of advanced AI models is tempered by the fear of their potential misuse. This conflict came to a head when OpenAI and Anthropic decided to restrict the release of certain AI models due to security concerns. The implications of this decision ripple through technology, policy, and society.

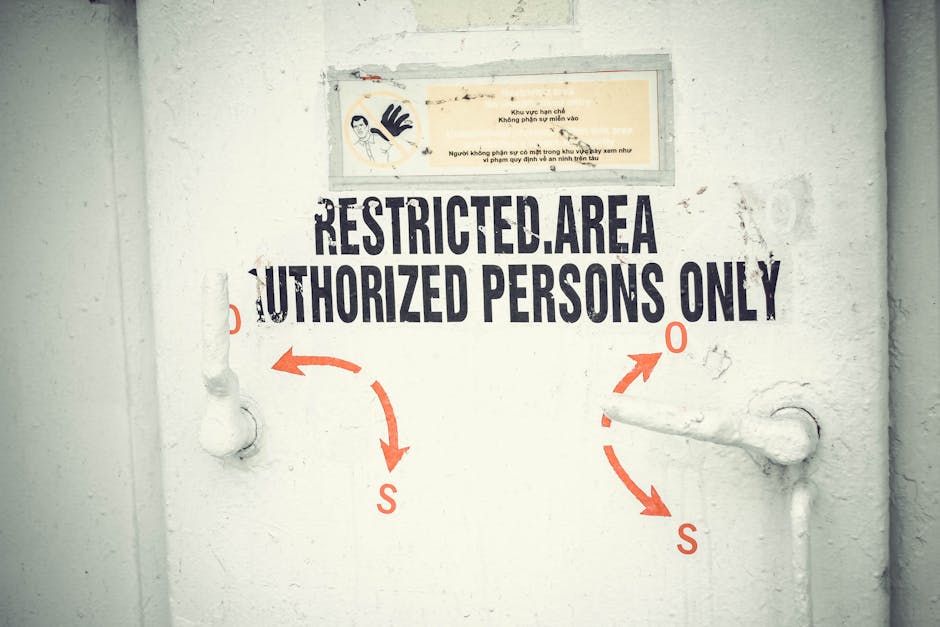

The decision to limit AI model access stems from a growing recognition of the risks associated with powerful AI tools. OpenAI’s move, reported by multiple sources, signals a shift in how technology companies approach the deployment of AI. As these models become increasingly sophisticated, the potential for harm rises, leading to a reconsideration of how they are shared and utilized.

This narrative is not just about corporate caution; it reflects broader societal anxieties about AI. AI has already been implicated in real-world harm: in a recent case in Florida, ChatGPT was allegedly involved in planning a mass shooting. Restricting access to certain AI tools is a response to such fears, but it also raises important questions about transparency and innovation.

The Risks and Benefits of AI: A Delicate Balance

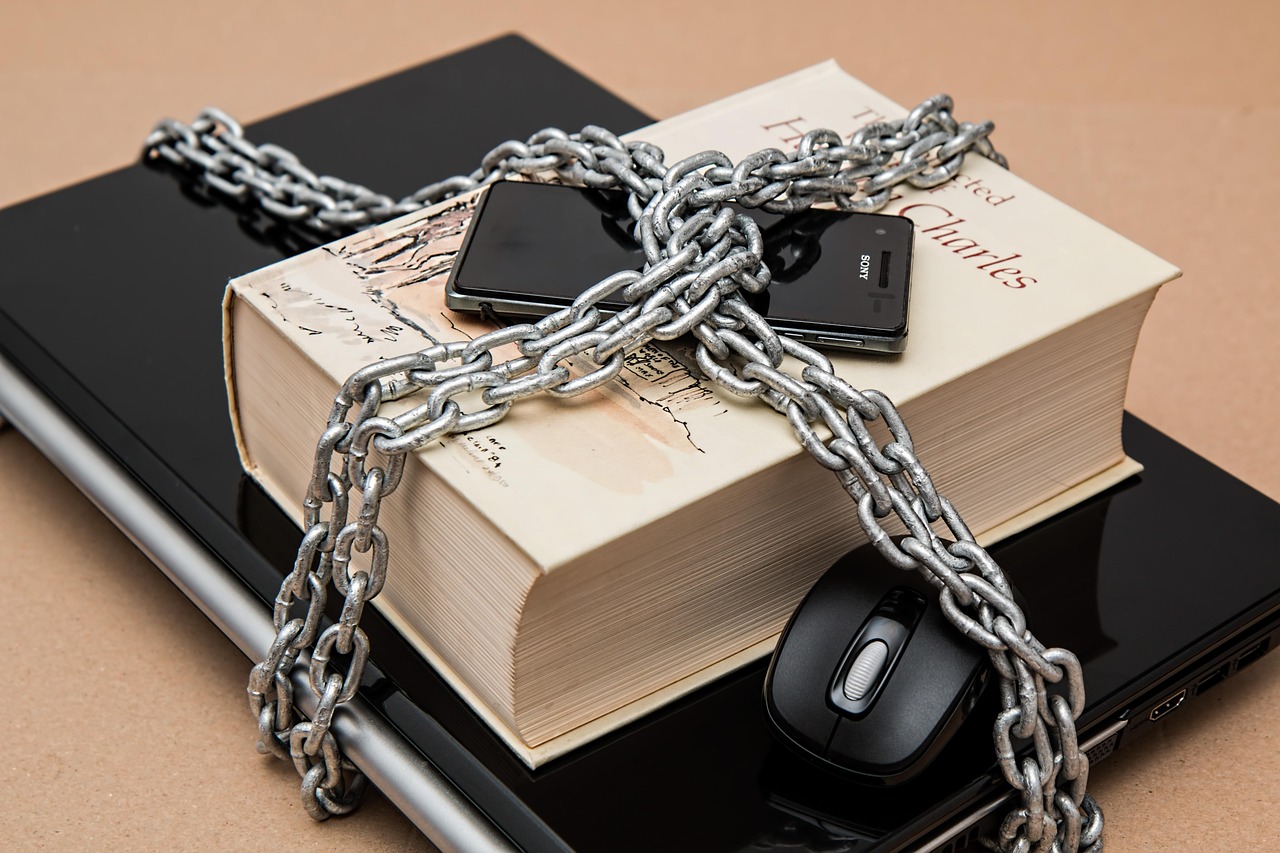

According to a report from NBC News, one concern is that limiting access may create a divide between those who can afford to develop AI safely and those who cannot. This exposes a fundamental tension in the current debate: how to weigh the risk of misuse against the need for progress. Some experts argue that the potential benefits of AI far outweigh the risks, and that restricting access could mean missing out on breakthroughs that improve lives and address pressing global challenges.

AI’s role in various sectors, from healthcare to finance, underscores its potential to drive significant advancements. However, as companies like OpenAI and Anthropic tread carefully, the question arises: how do we foster innovation while ensuring safety? The answer may not be straightforward, as it involves navigating a complex landscape of ethical considerations and regulatory frameworks.

Regulatory Challenges and Industry Response

MIT Technology Review reported that OpenAI has joined Anthropic in curbing the release of an AI model over security fears. The decision marks a critical moment in the technology's evolution. As the complexities of AI come into focus, its implications deserve careful discussion, and only by weighing innovation against safety can the industry meet the challenges ahead and realize the technology's full potential.

Moreover, the implications of these restrictions extend beyond the tech industry. As AI becomes integral to various fields, the potential for misuse grows. This reality has prompted calls for clearer regulations and ethical guidelines governing AI development and deployment. The challenge lies in creating a framework that encourages innovation while safeguarding against potential threats.

Collaboration Between Stakeholders

As we examine the future of AI, it is crucial to consider the role of policymakers and industry leaders in shaping this landscape. Collaboration between these stakeholders is essential to address the multifaceted challenges posed by AI. Without a concerted effort, the risk of harmful applications could overshadow the benefits that AI promises to deliver.

For instance, the recent investigation in Florida regarding AI’s alleged involvement in a mass shooting highlights the urgent need for a robust dialogue between tech companies and regulatory bodies. This incident serves as a reminder that while AI has the potential to revolutionize industries, it also poses significant risks that must be managed responsibly.

Risks, Trade-Offs, and What Comes Next

In conclusion, the decision by OpenAI and Anthropic to restrict access to certain AI models underscores the growing recognition of the risks associated with advanced AI technologies. As we move forward, it is imperative to strike a balance between fostering innovation and ensuring safety. The future of AI will depend on our ability to navigate these challenges collaboratively, ensuring that the technology serves humanity rather than endangers it.

Sources: MIT Technology Review, Bloomberg, BBC.